Overview

I joined Augur as the founding product designer, responsible for transforming an early-stage AI proof of concept into a scalable SaaS platform.

Augur applies artificial intelligence to existing CCTV infrastructure, enabling automated detection of people, events, and behavioural patterns—shifting security from reactive monitoring to proactive insight.

My role

Founding Product Designer

Scope

Product strategy, UX/UI design, design system

Stage

Early-stage startup (pre-seed → seed)

Outcome

Investor-ready product contributing to $15M seed funding

The problem

Traditional CCTV systems are fundamentally limited:

- They rely on humans to monitor and interpret footage

- Investigations require hours of manual video review

- Insights are lost when footage is deleted

- Systems are reactive, not preventative

The result: Security teams spend more time reviewing the past than preventing the future.

The opportunity

Augur’s technology could:

- Detect people, behaviours, and patterns automatically

- Surface real-time alerts and insights

- Turn static footage into searchable, queryable data

The challenge was designing a product that could make complex AI outputs usable, intuitive, and actionable.

My role

As the founding designer, I:

- Defined the product vision and UX strategy

- Translated a technical prototype into a usable SaaS platform

- Led discovery, concept exploration, and interaction design

- Worked closely with the founder and engineers

- Built the foundations of a scalable design system

Discovery & Alignment

Step 1: Rapid response

In my first week, I delivered design work to support a live client presentation, quickly getting up to speed with the product and domain.

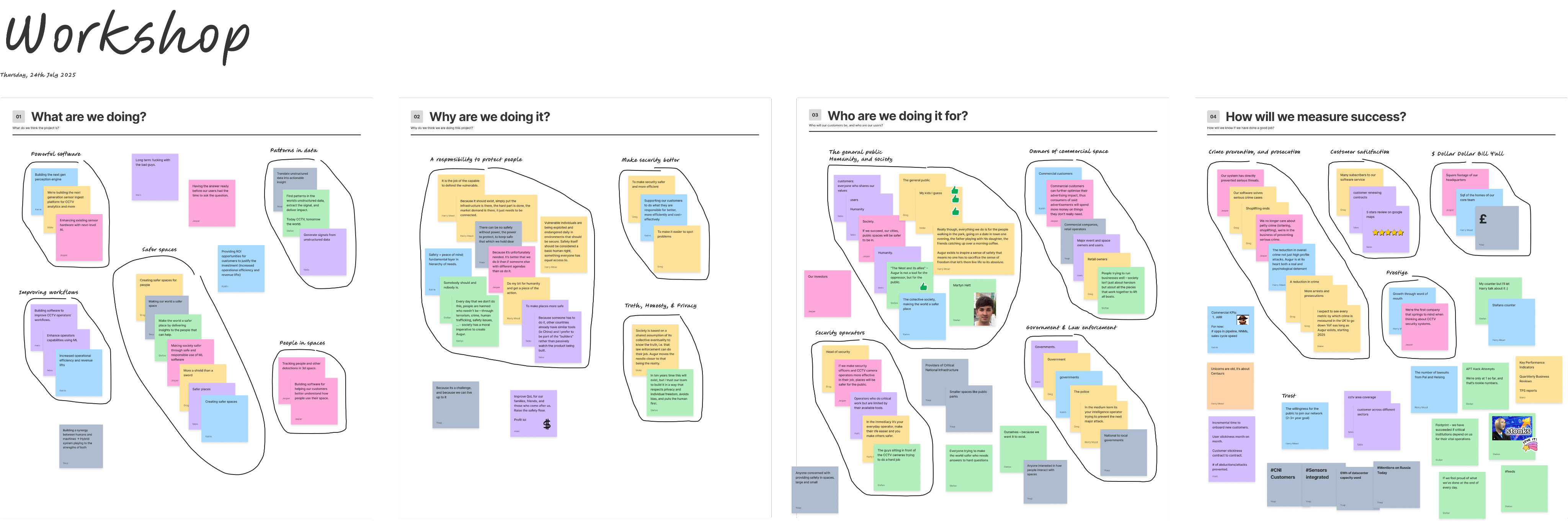

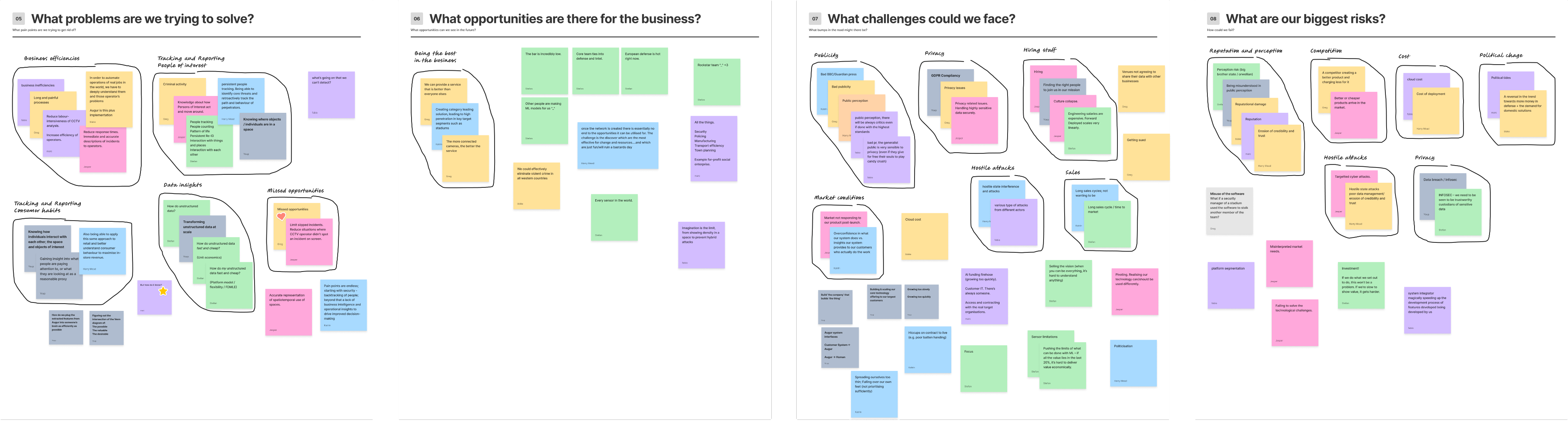

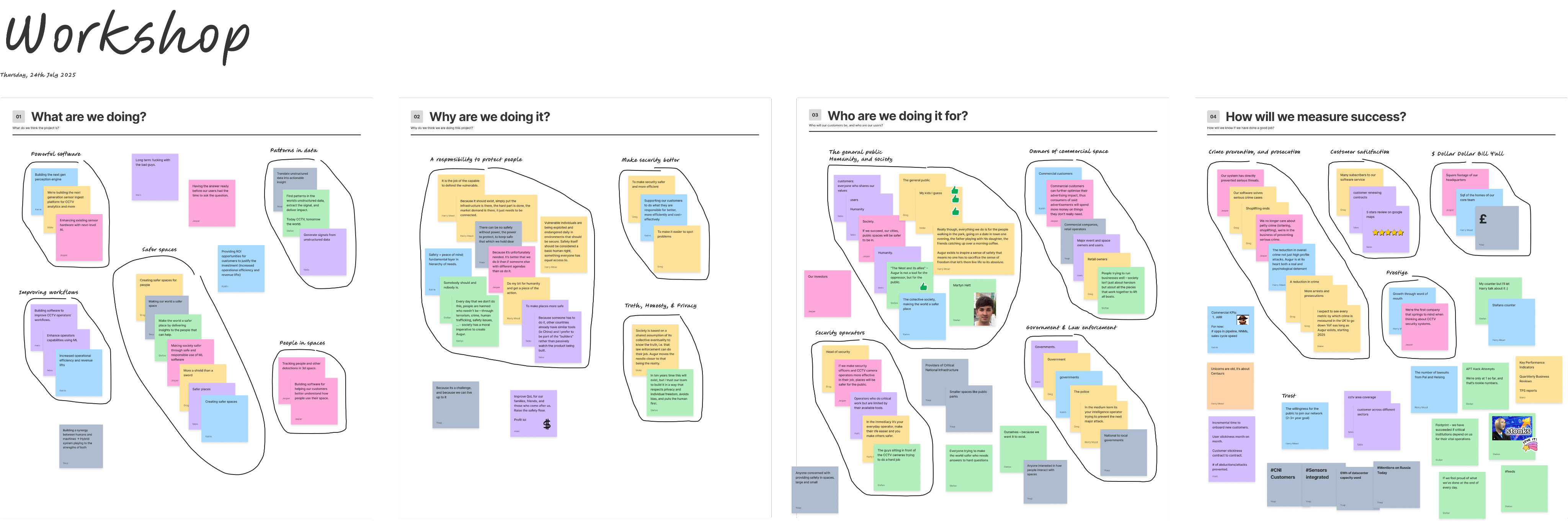

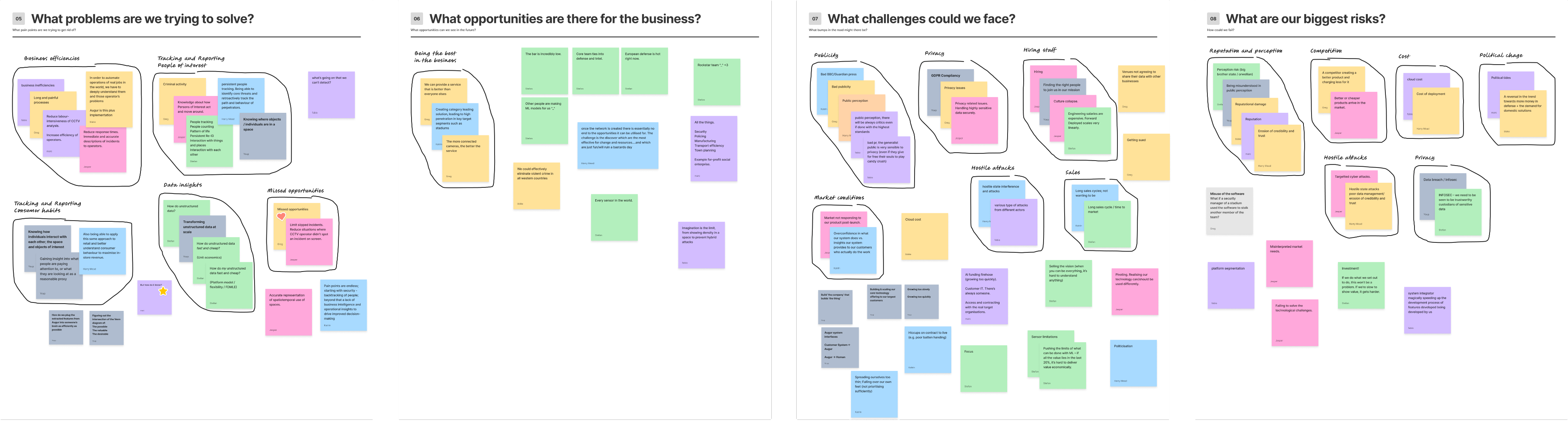

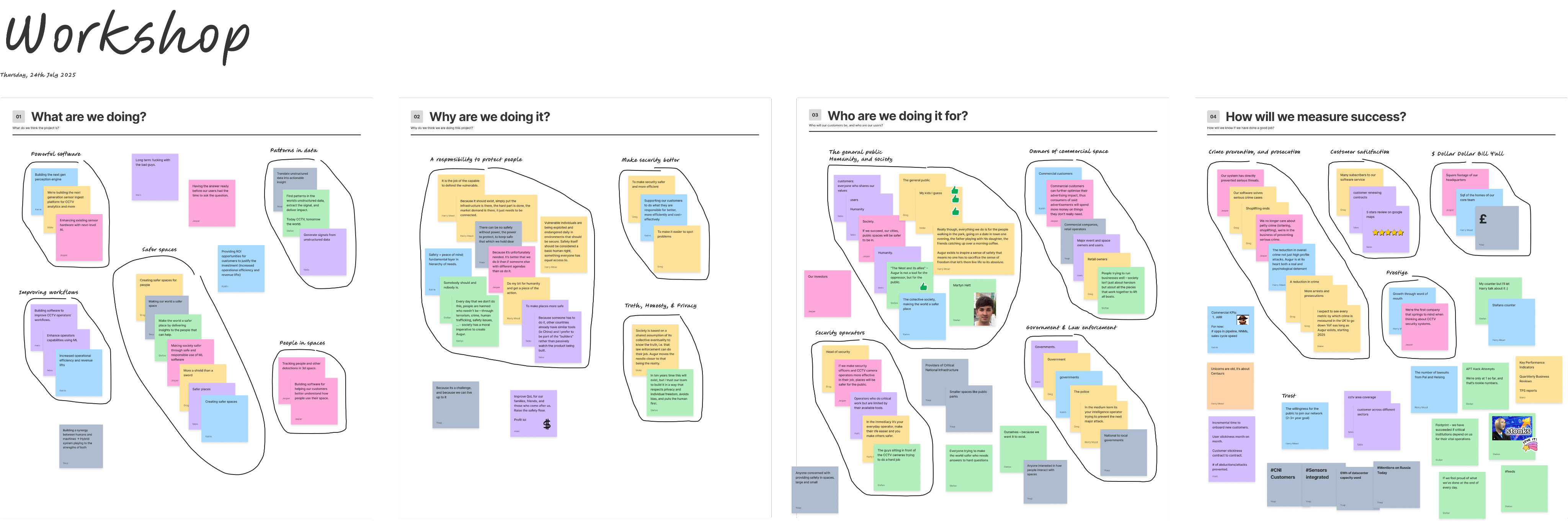

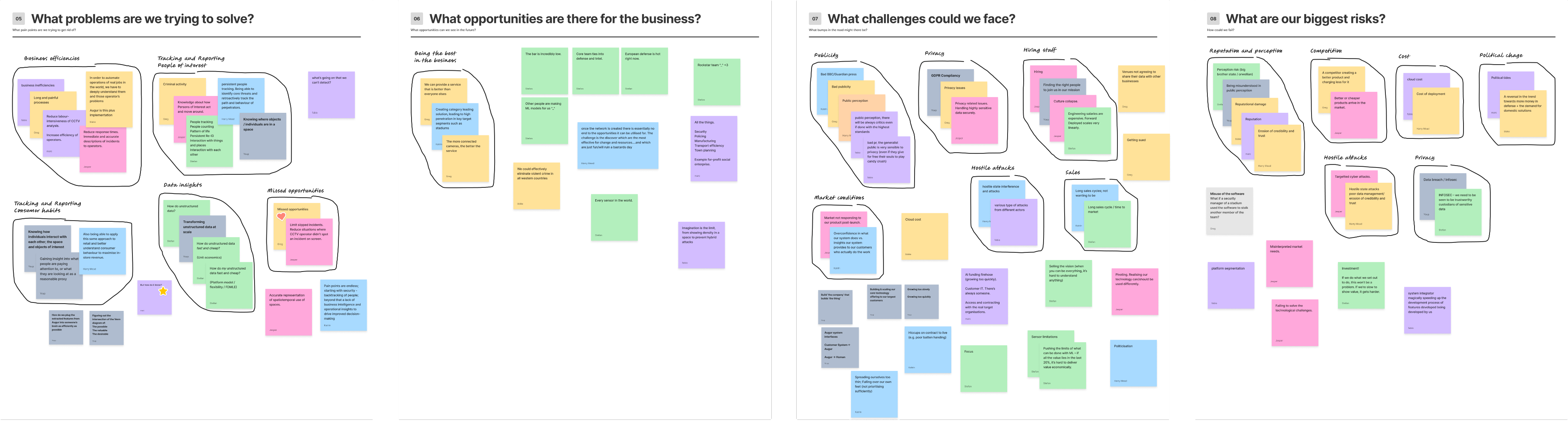

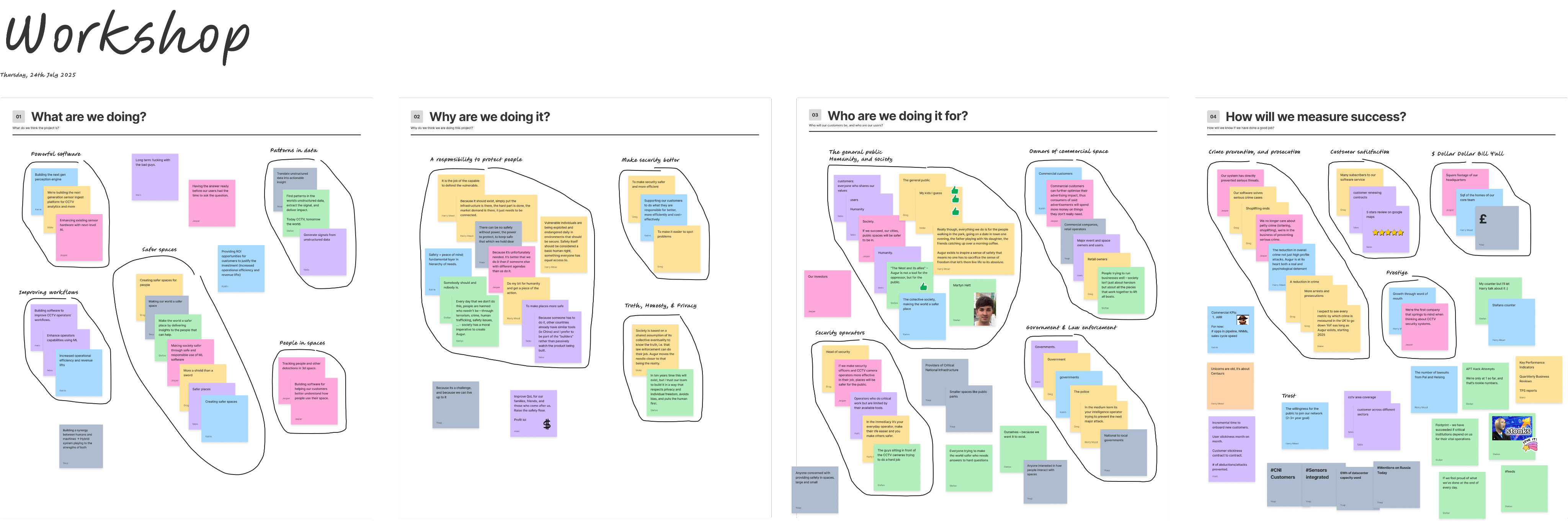

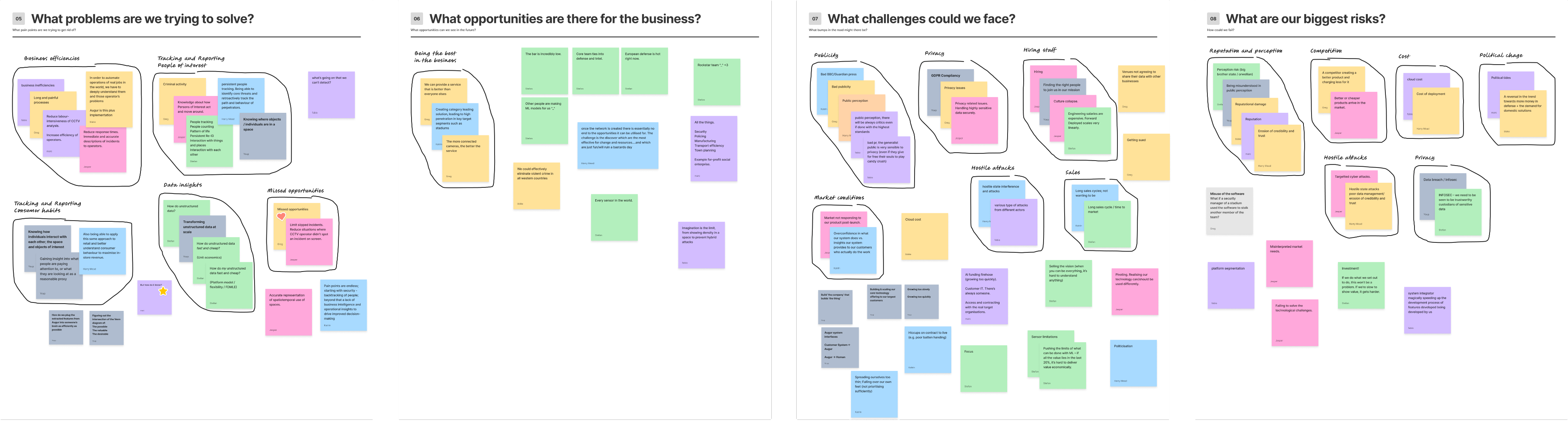

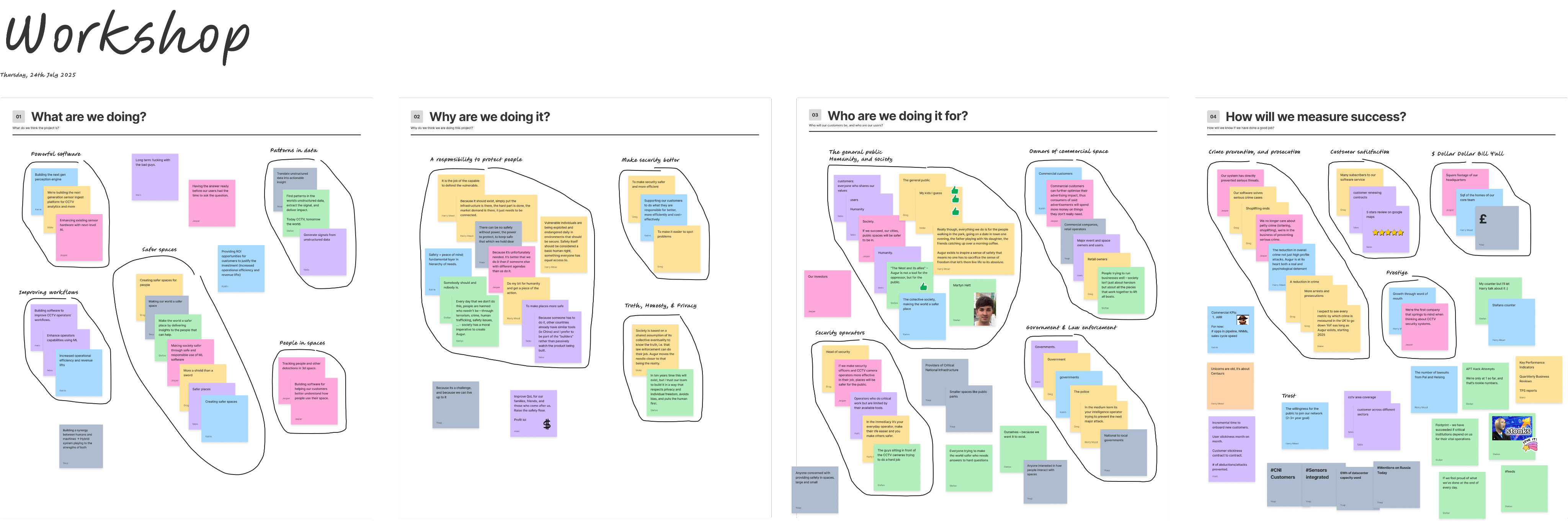

Step 2: Defining the problem space

I facilitated a cross-functional workshop to align the team on:

- What we’re building and why

- Who it’s for

- What success looks like

- Risks, constraints, and opportunities

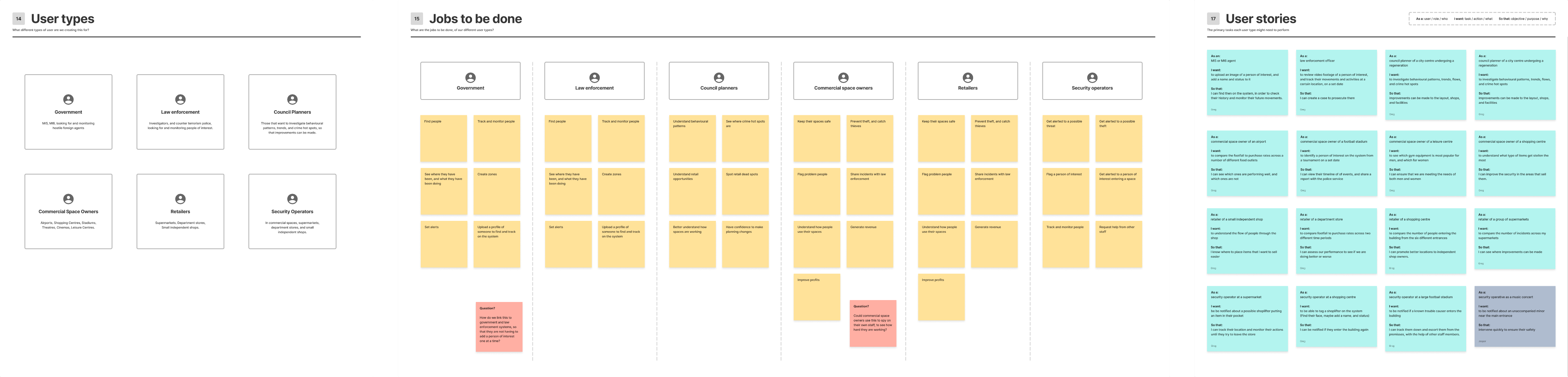

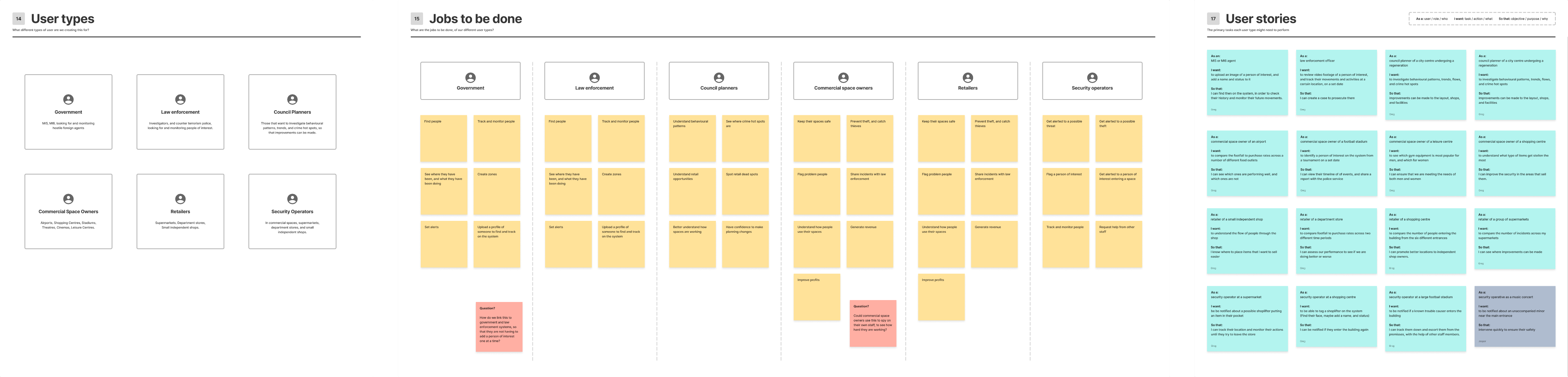

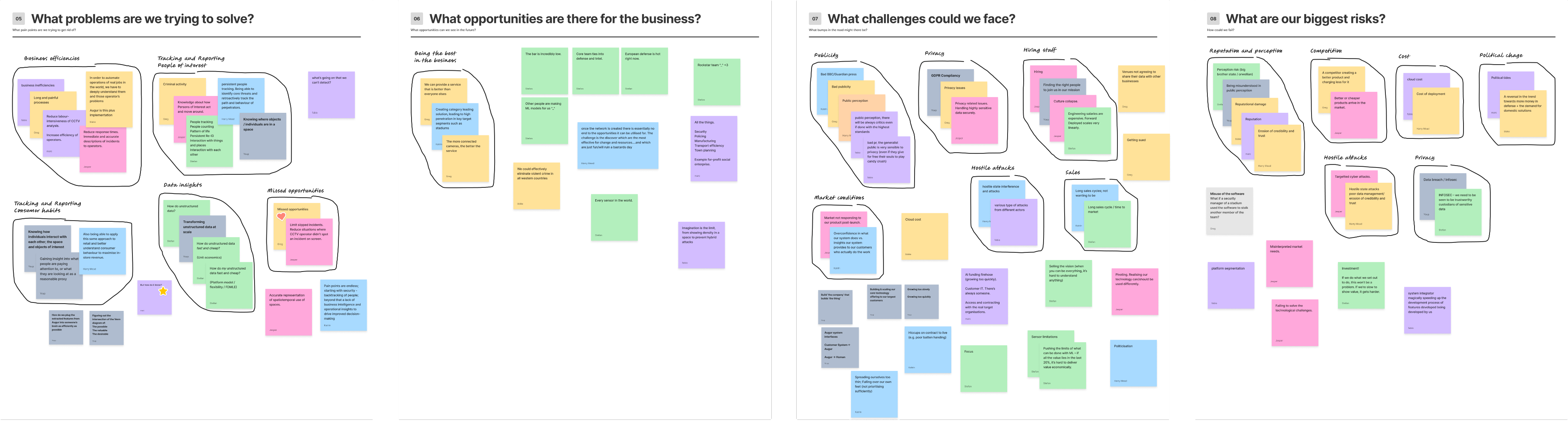

We mapped:

- User types (security operators, analysts, business owners)

- Jobs to be done

- Potential applications across industries

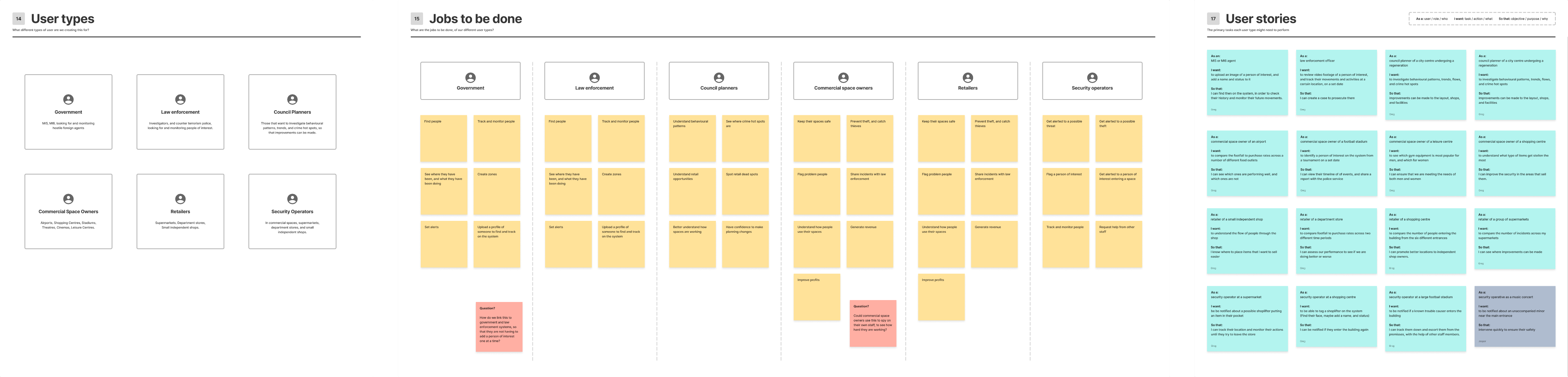

Shaping the product

Insights from discovery were translated into:

- User stories

- End-to-end journeys

- Core interaction flows

This helped define a product focused on three key activities:

- Monitoring (what’s happening now?)

- Investigation (what happened?)

- Analysis (what patterns exist?)

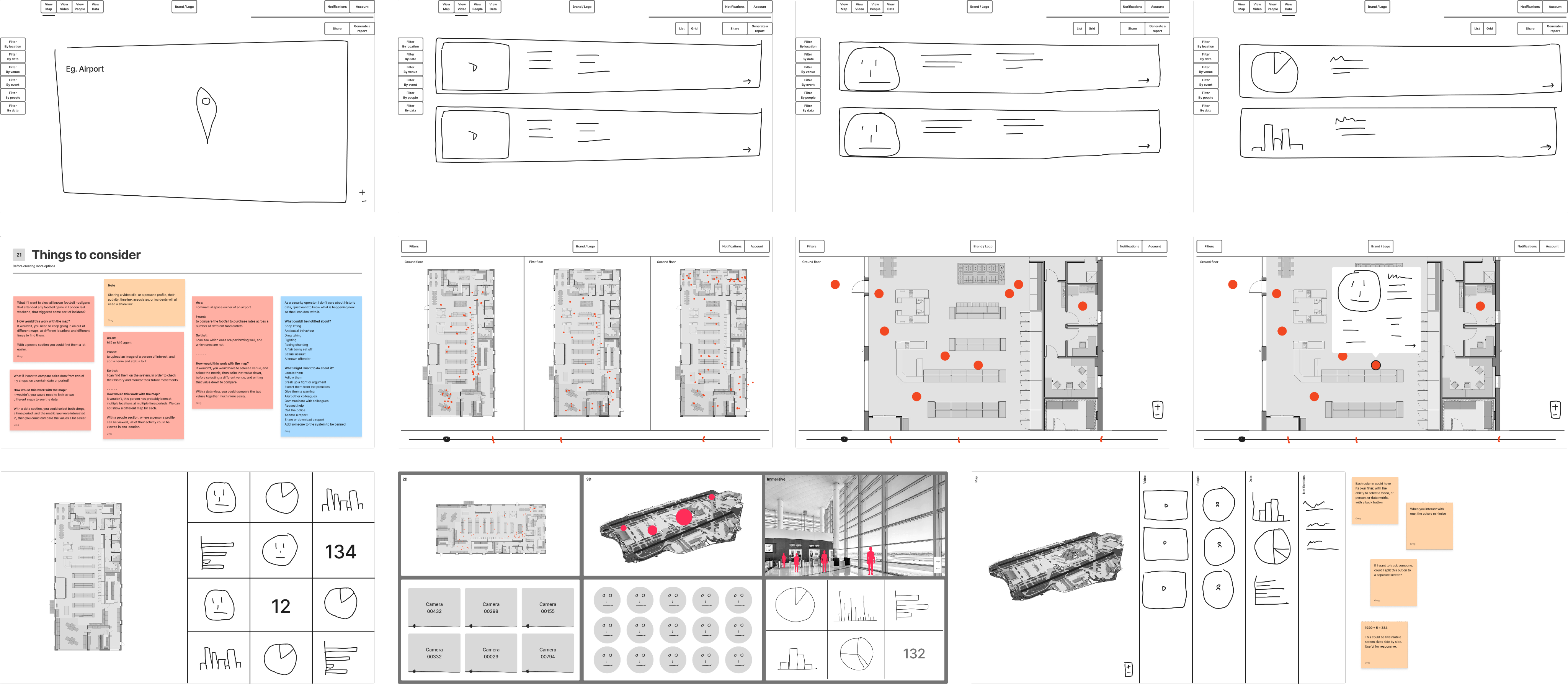

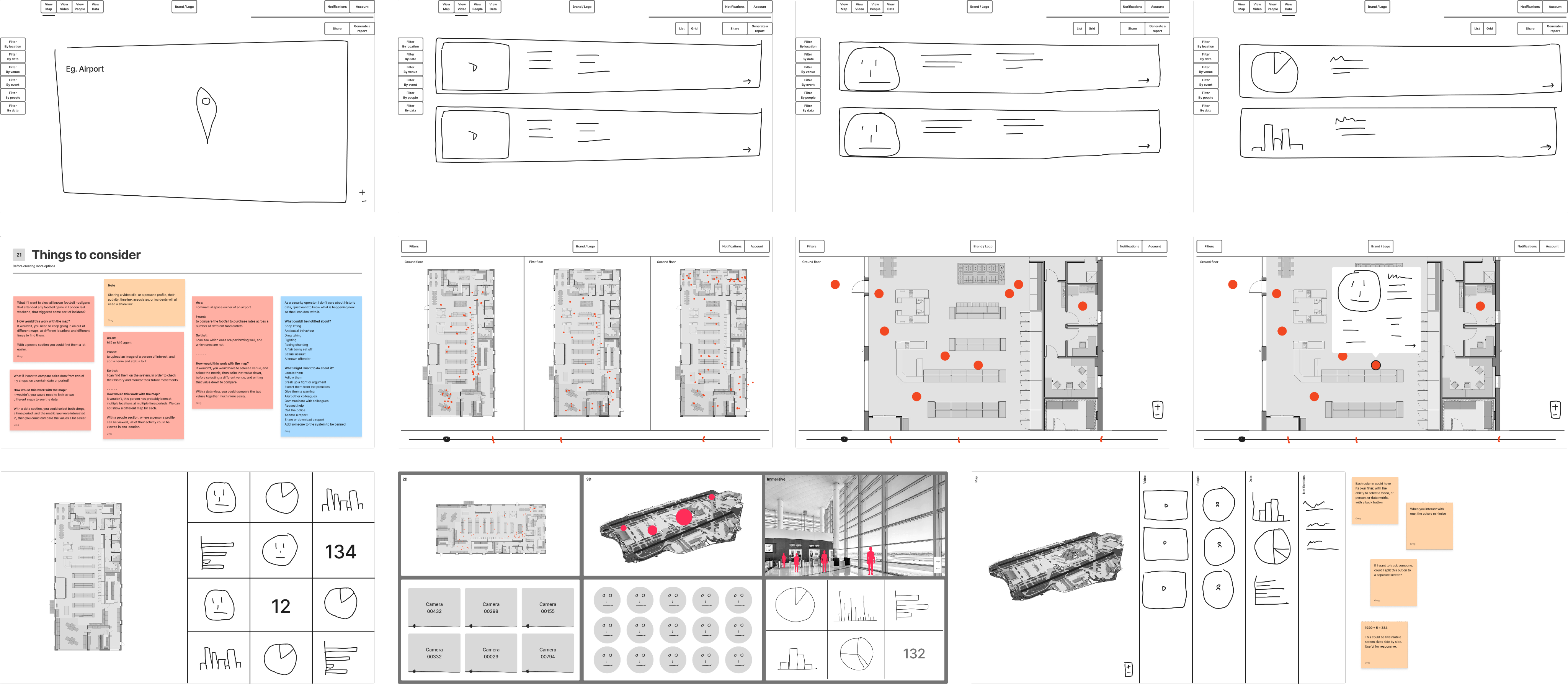

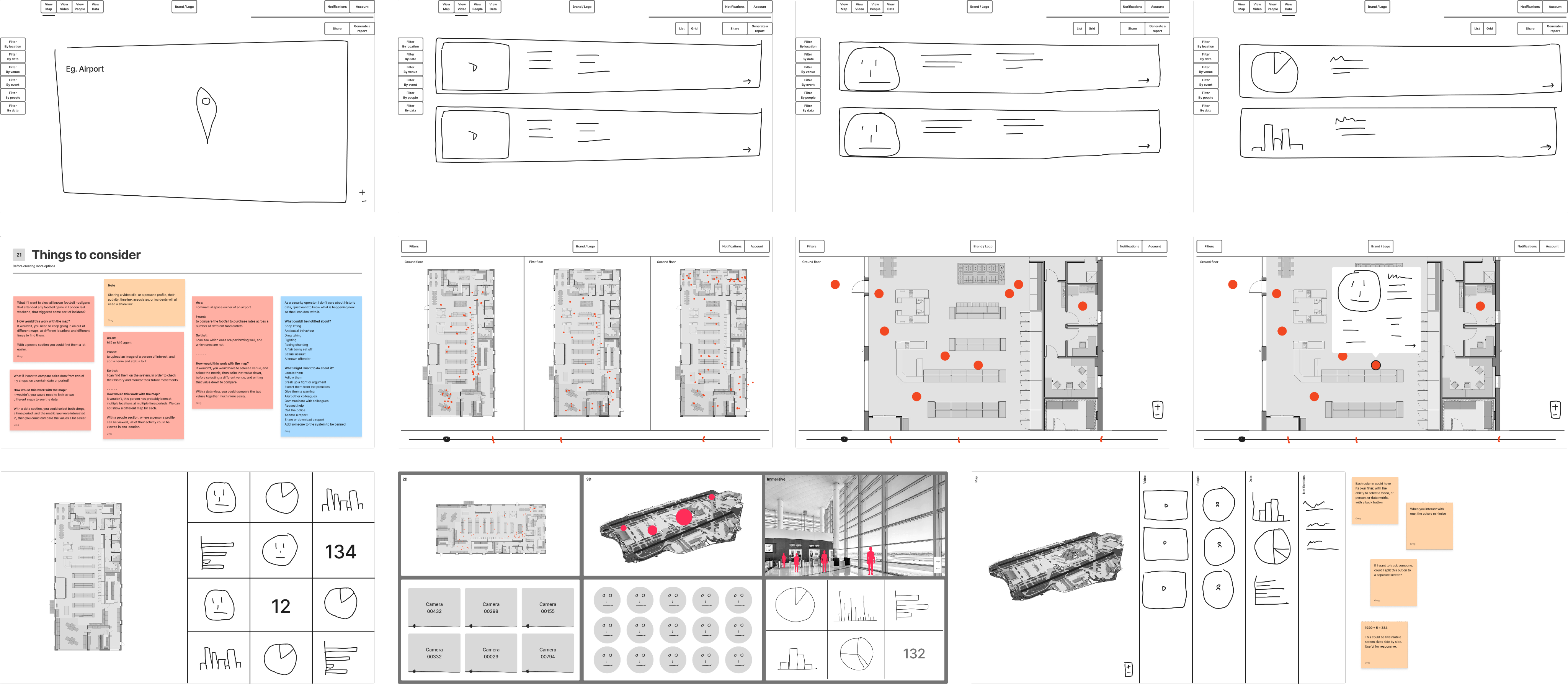

Design exploration

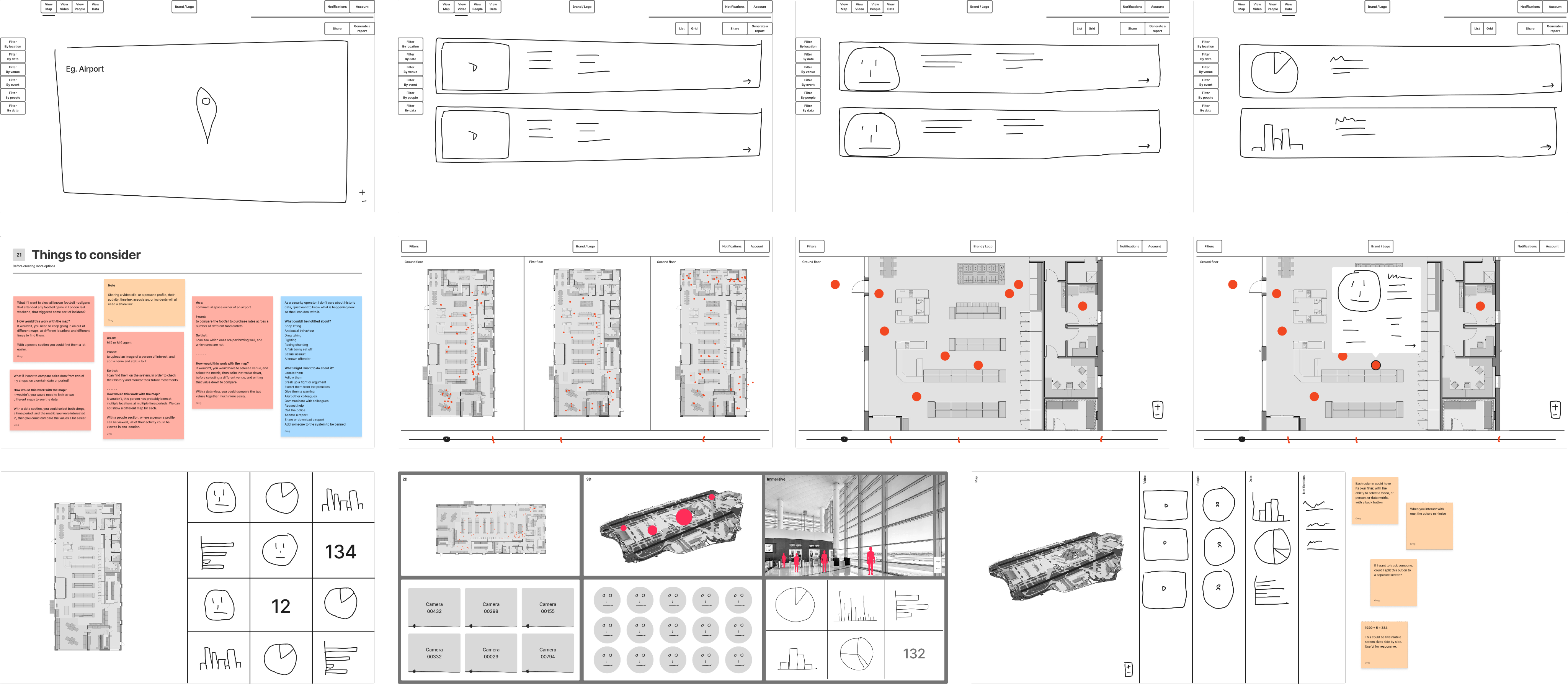

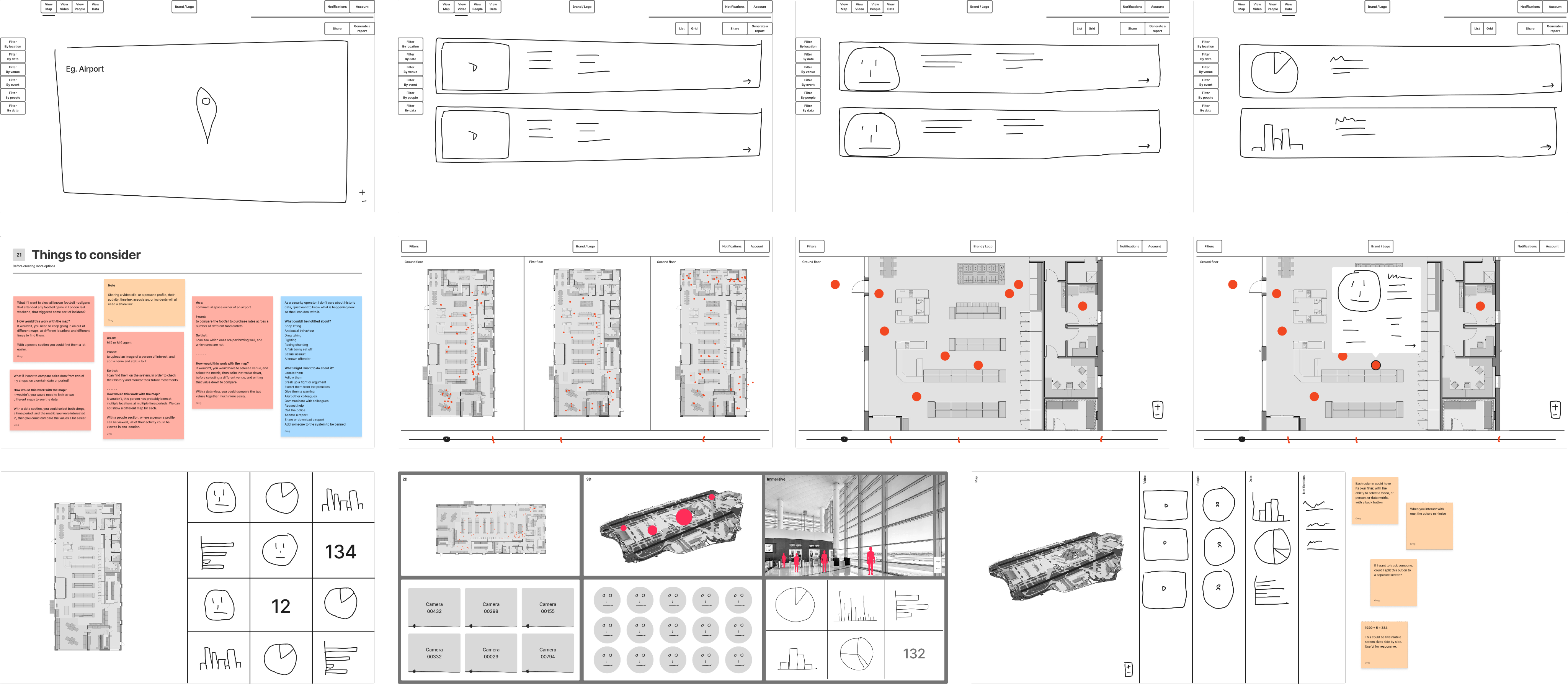

I explored 7 different product directions, each tackling how users might:

- Navigate physical spaces

- Interact with time

- Understand AI-detected events

- Move between data and video

We reviewed concepts collaboratively, assessing trade-offs between:

- Simplicity vs capability

- Spatial vs list-based interfaces

- Real-time vs historical interaction models

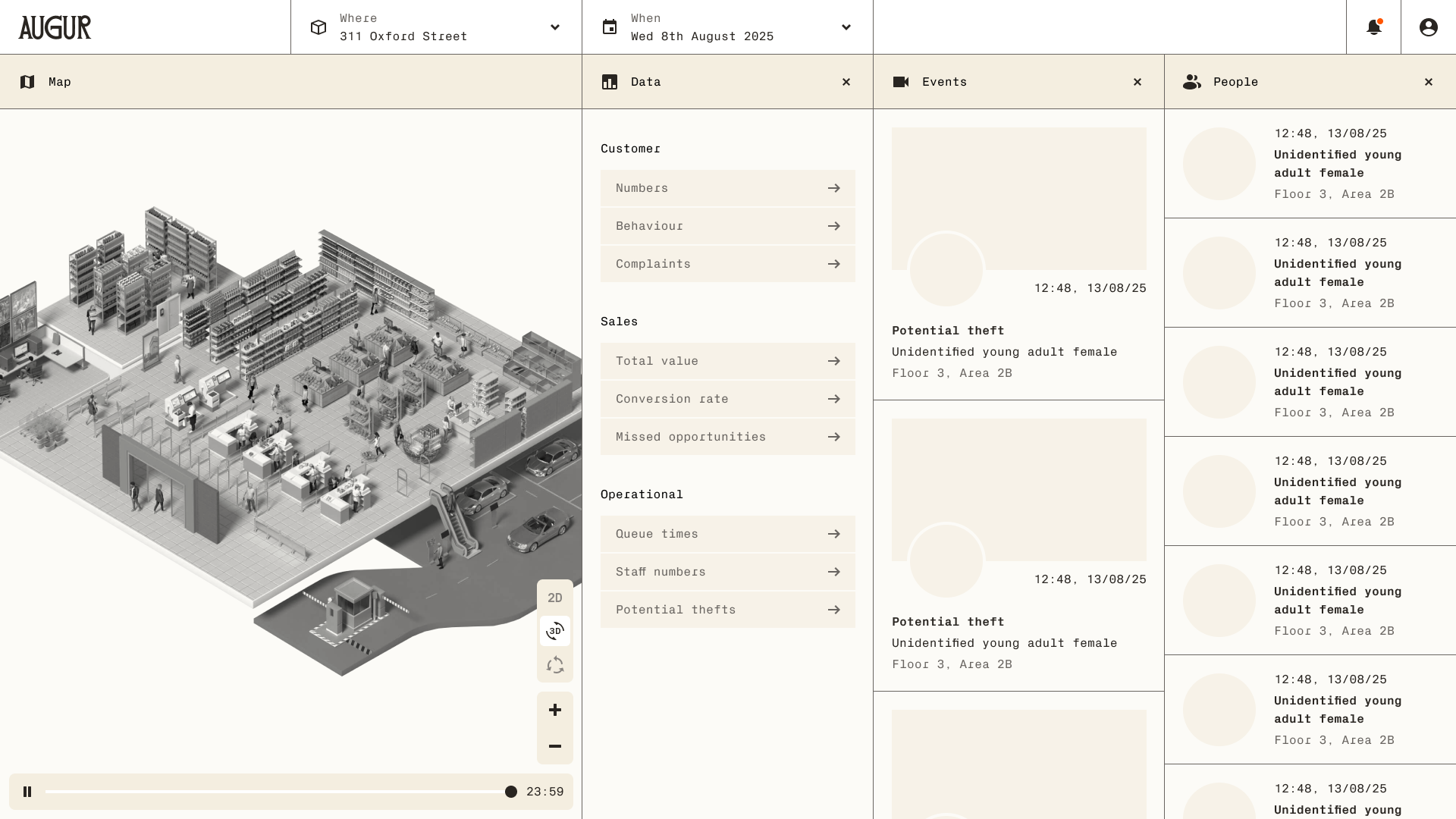

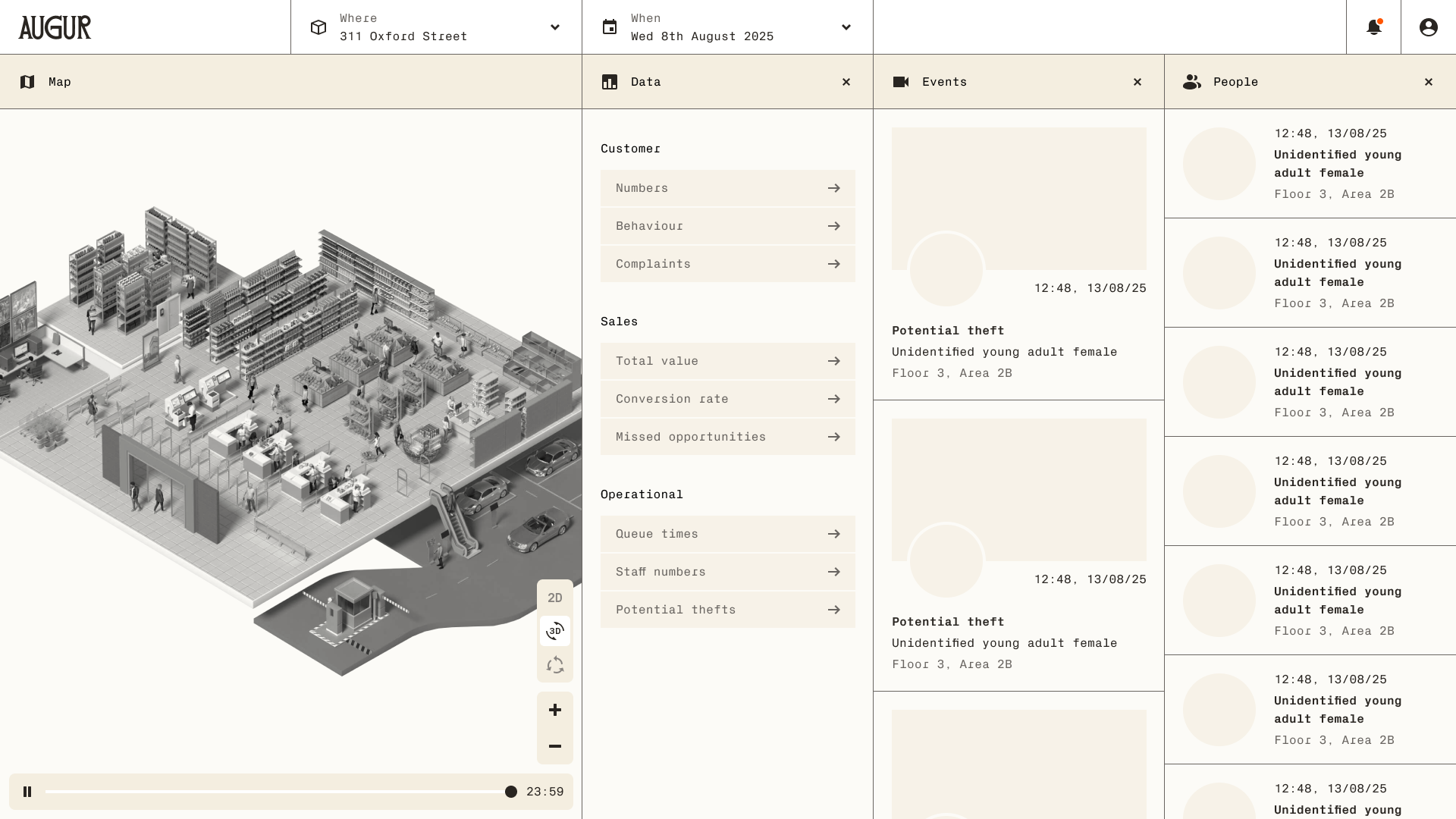

Designing the core experience

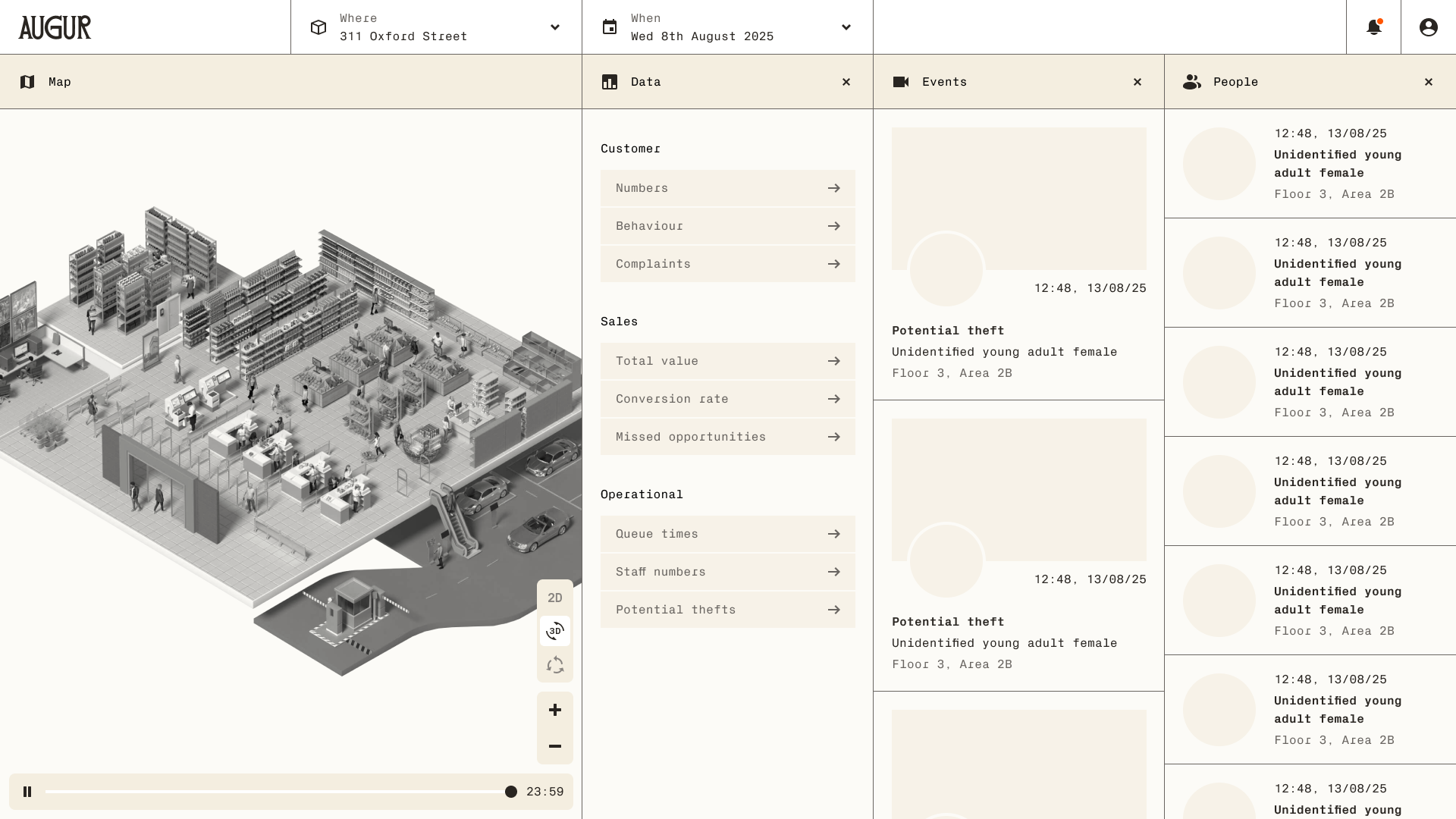

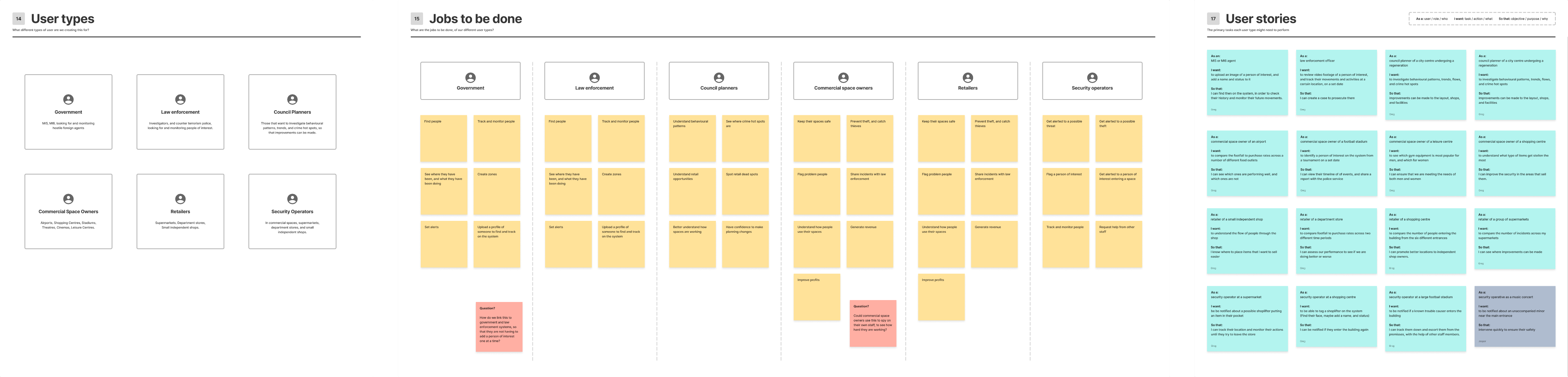

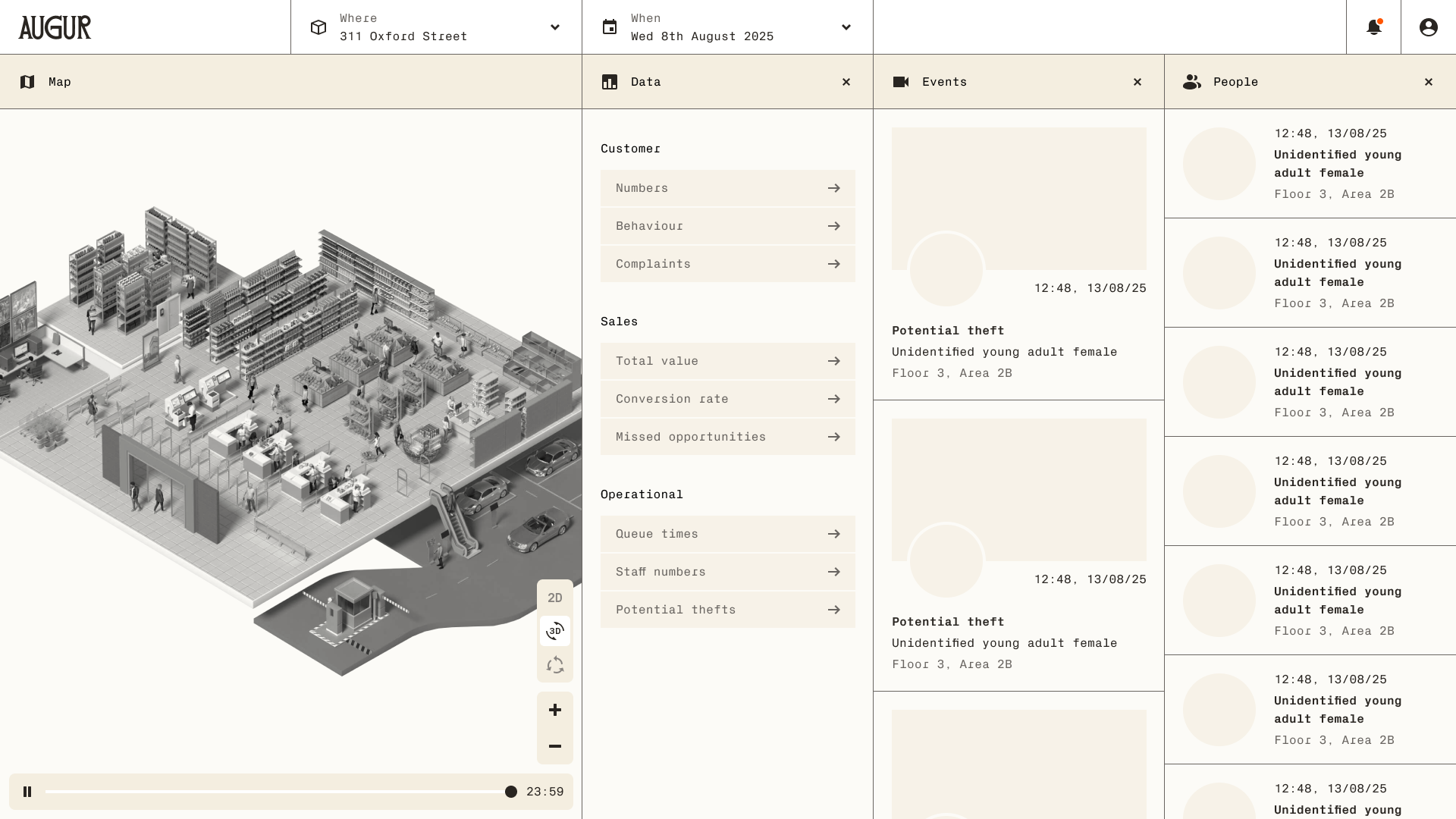

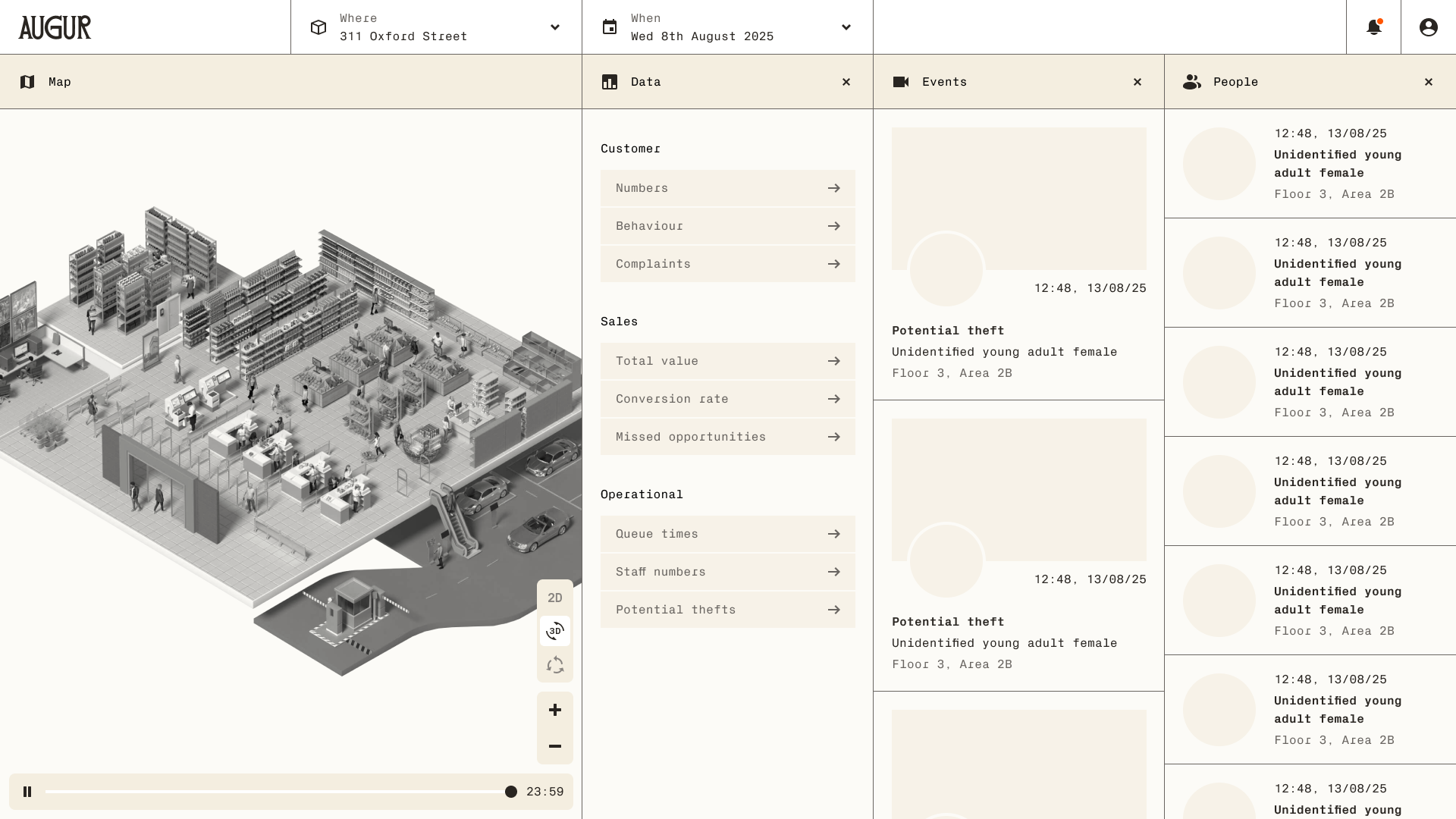

The final product centred on a spatial + temporal interface, allowing users to explore environments across time.

Space navigation

Kings Road

Where

Select and move through physical environments

Time control

Yesterday morning

When

Scrub backward and forward through events

Entity tracking

12:48

Unidentified young female

Floor 3

Identify and follow individuals across a space

Event detection

12:48

Potential theft

Unidentified young female

Floor 3, Area 2B

View AI-triggered incidents and alerts

Pattern recognition

Surface behavioural insights over time

Multi-view environments

Switch between 2D, 3D, and immersive views

Handling complexity

One of the core challenges was balancing:

- High data density

- Real-time + historical interaction

- Multiple mental models (space, time, people, events, data)

I addressed this by:

- Structuring the UI around clear layers (space, time, people, events, data)

- Using progressive disclosure to reduce overload

- Designing intuitive controls for time and navigation

- Creating consistent interaction patterns across views

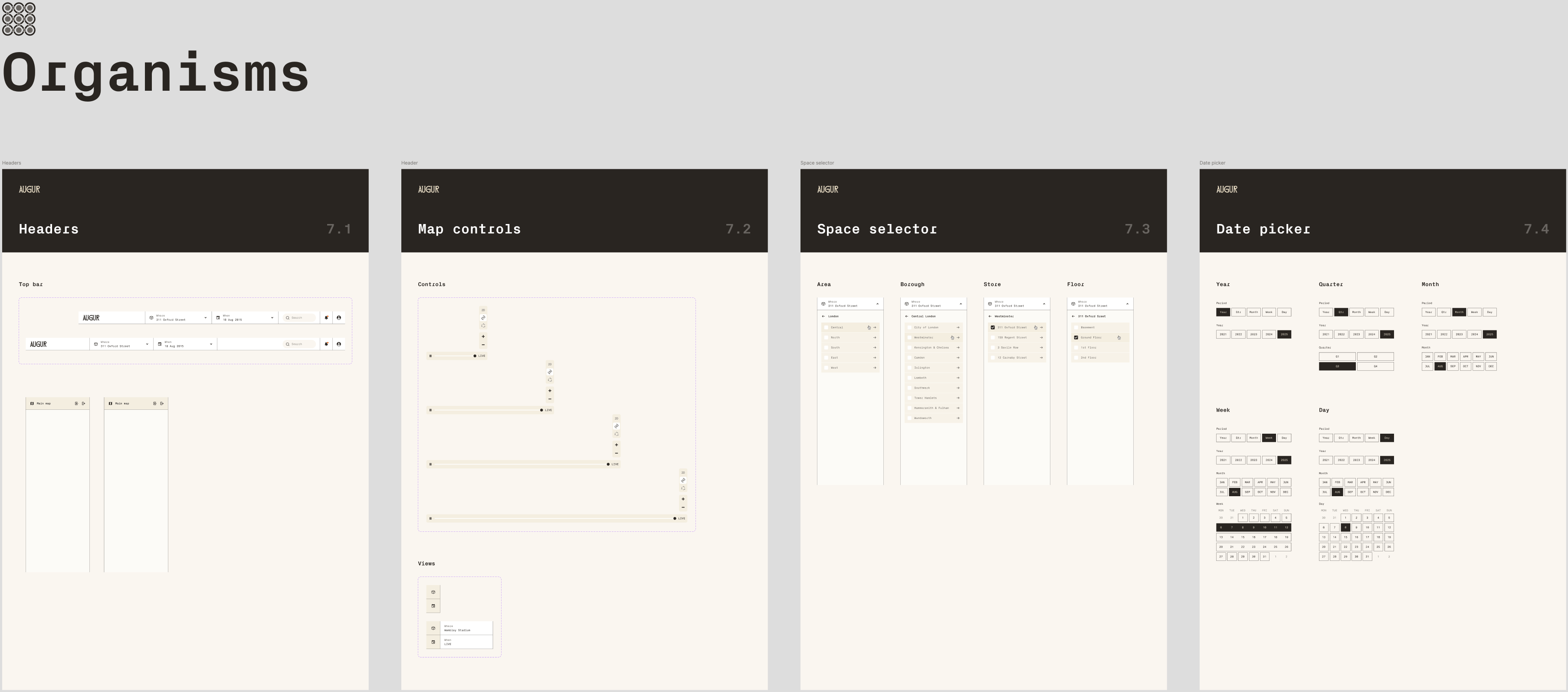

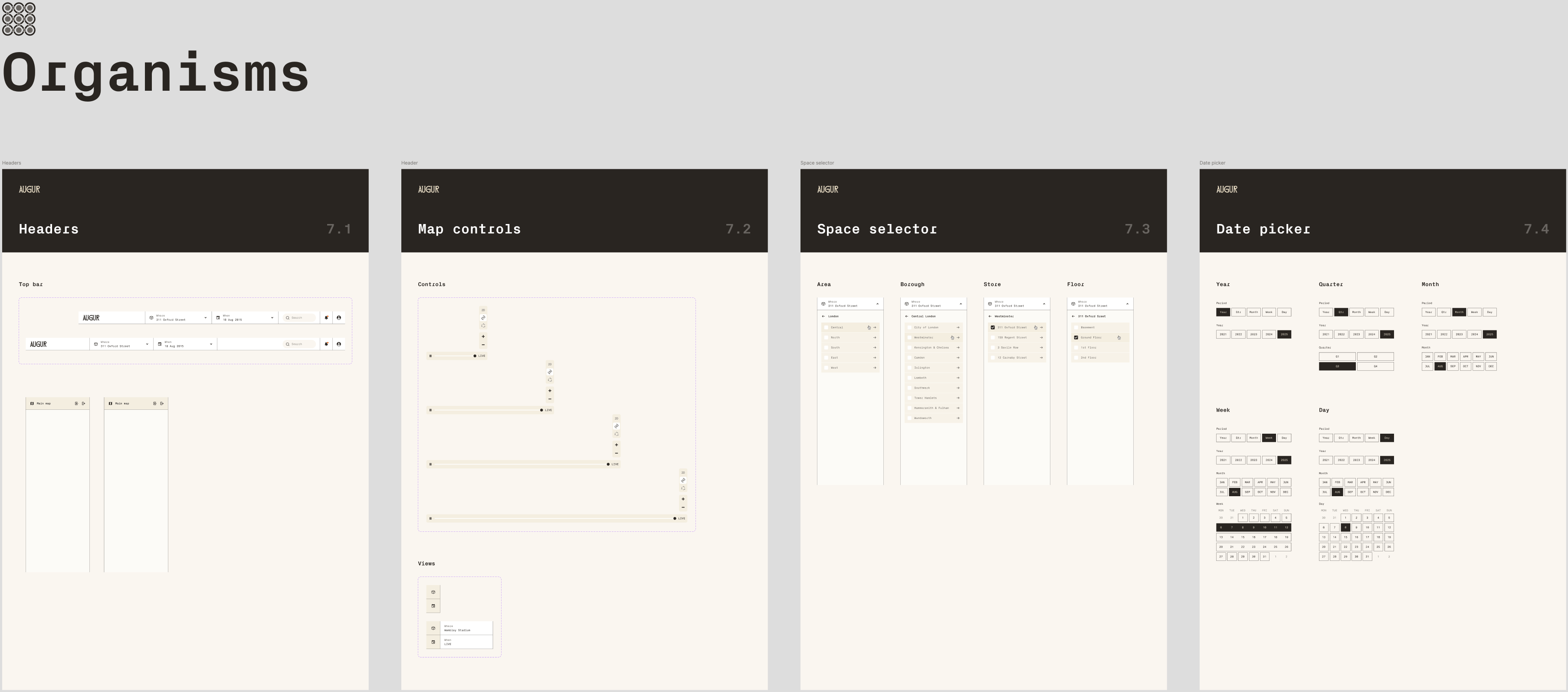

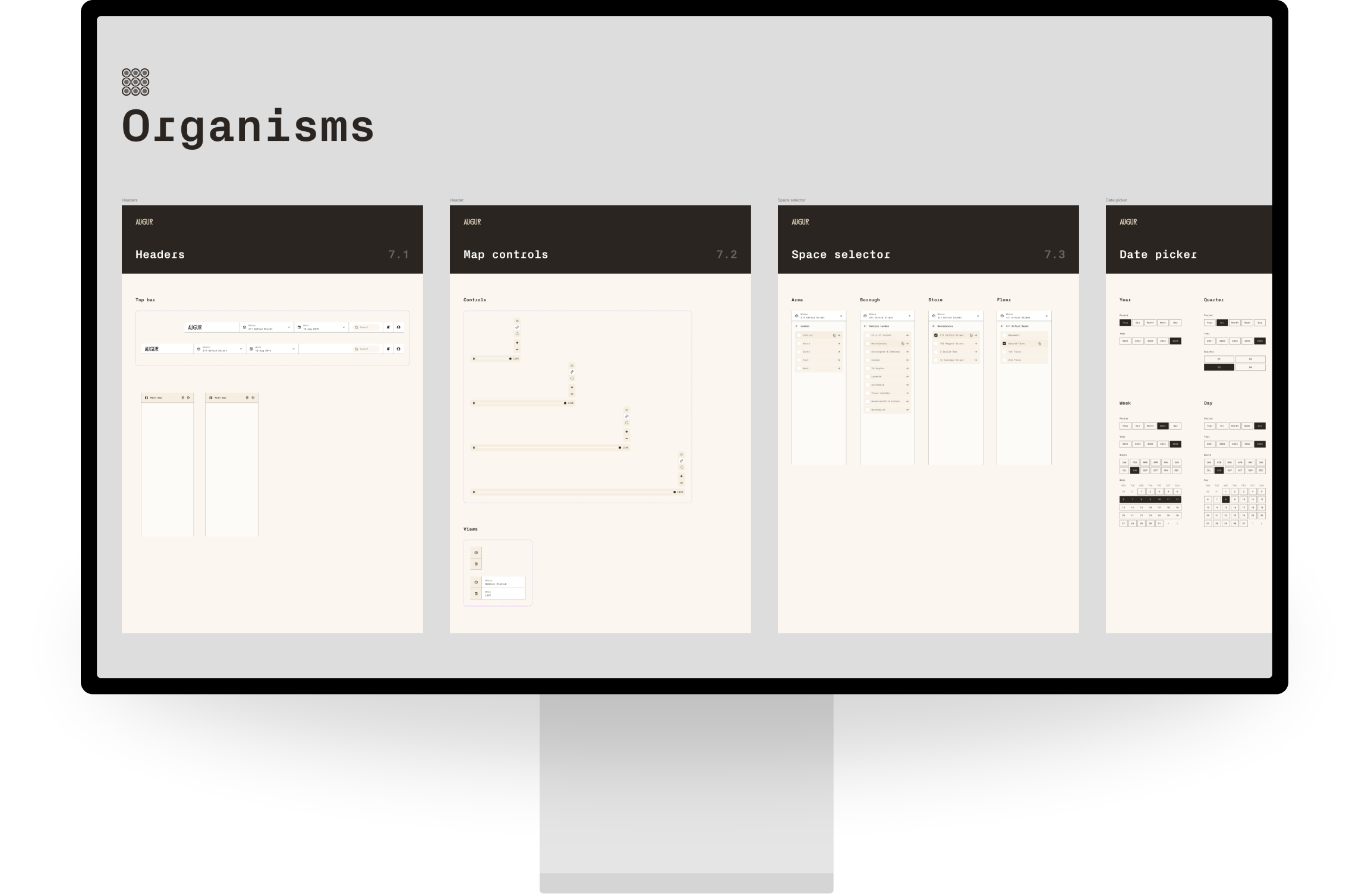

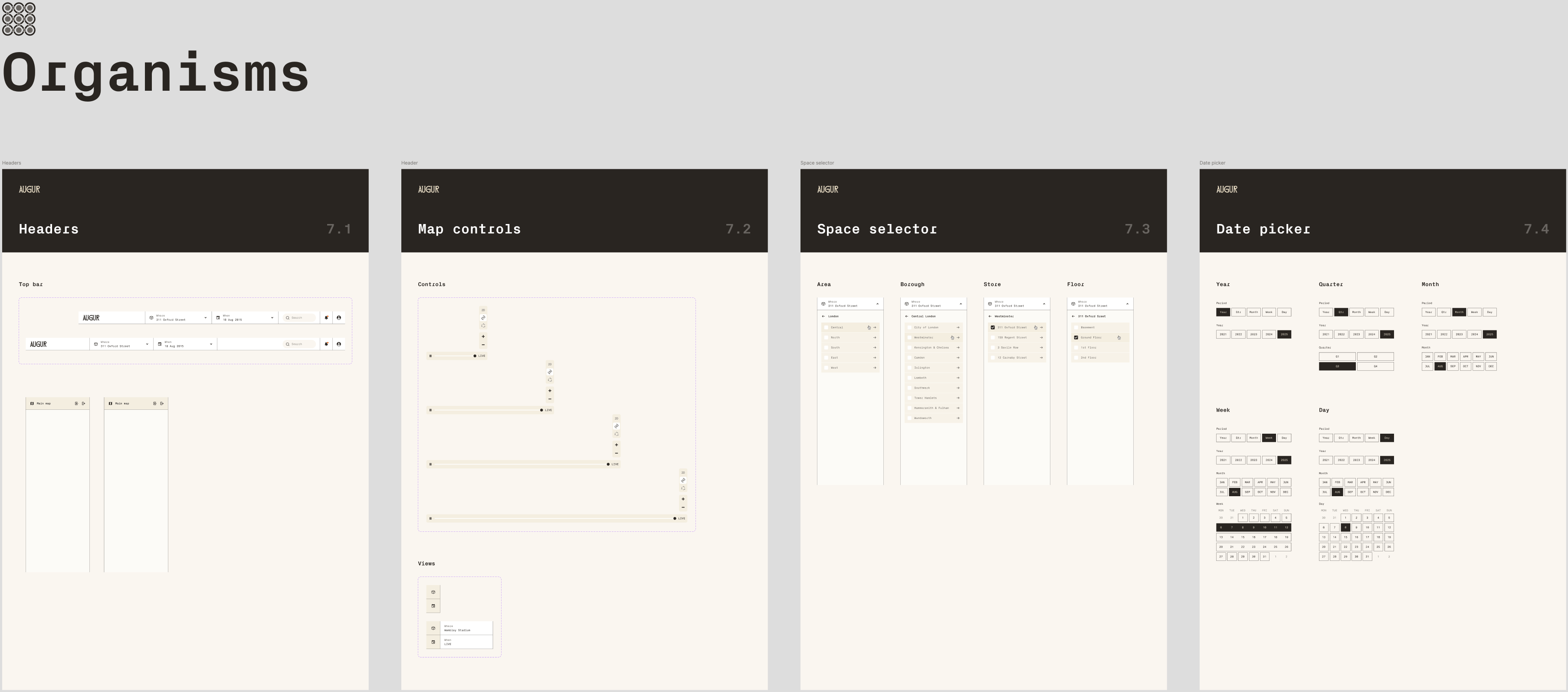

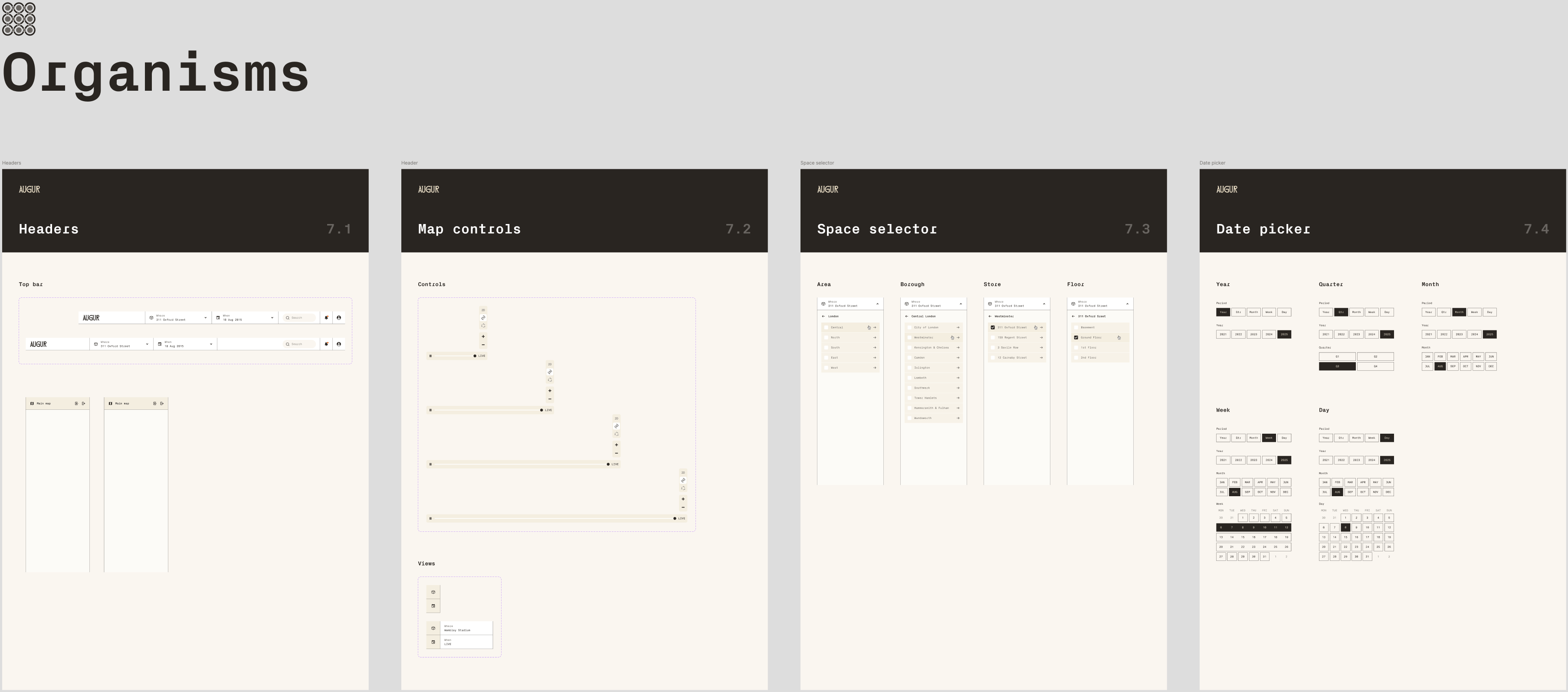

Design system

Starting from a basic brand guideline, I built a scalable design system including:

- Typography and colour foundations

- Component library (navigation, panels, data views)

- Interaction patterns for complex behaviours

The system evolved alongside the product, enabling:

- Faster iteration

- Consistency across features

- Easier collaboration with engineering

The final outcome

The result was a fully designed, demonstrable SaaS platform that:

- Makes AI-generated insights accessible and actionable

- Supports a wide range of environments:

  - Commercial spaces

  - Transport networks

  - Enterprise and government use cases

- Bridges the gap between advanced AI capability and real-world usability

Impact

- Contributed to $15M seed funding

- Helped evolve a proof of concept into a viable product

- Enabled proactive, insight-driven security workflows

- Positioned the company for scaling across industries

Reflection

This project reinforced the importance of:

- Clarity in complex systems

- Designing for multiple dimensions (space + time + behaviour)

- Balancing technical possibility with user understanding

- Driving product thinking within an early-stage startup
